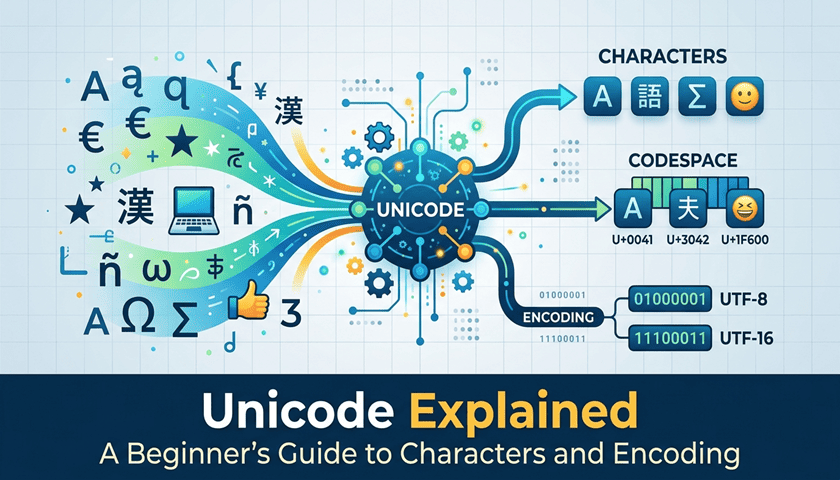

Ever wonder how your phone displays Japanese characters, Arabic script, and 🎉 emojis all in the same message? The answer is Unicode — and once you understand it, a lot of the "magic" behind your screen starts to make sense.

So, What Exactly Is a Character?

A character is any symbol used in written communication — letters, numbers, punctuation, and yes, emojis. Simple enough, right?

The tricky part is that the world uses a lot of different writing systems. English uses the Latin alphabet, Japanese uses Kanji and Hiragana, Arabic reads right to left, and so on. Computers needed a way to handle all of them — without getting confused.

The Problem Unicode Was Built to Solve

In the early days of computing, different systems handled characters in their own way. Text written on one computer could show up as complete nonsense on another. It was a mess.

The fix? Give every single character in every language its own unique number — called a code point. That's essentially what Unicode is: a giant, universal dictionary that matches characters to numbers, so every device reads text the same way.

Before Unicode, older systems like ASCII could only handle 128 characters — great for English, useless for most of the world.

How Unicode Actually Stores Text: Meet UTF

Assigning numbers to characters is one thing — storing them efficiently is another. That's where UTF (Unicode Transformation Format) comes in. There are three main versions:

- UTF-8 — The most popular by far. It's flexible: simple English characters take up just 1 byte, while more complex characters use more. It's why most websites use it.

- UTF-16 — Uses more space but handles complex scripts more naturally. Common in apps and documents with lots of non-English text.

- UTF-32 — Every character gets the same amount of space (4 bytes). Consistent, but uses more memory than necessary for most uses.

Think of it like packing a suitcase: UTF-8 packs light when it can, UTF-32 always uses the same-sized box no matter what's inside.

Wait — Emojis Are Part of Unicode Too?

Absolutely! Every emoji has its own Unicode code point, just like the letter "A" does. That's why you can send a 🍕 from an iPhone and your friend sees the same pizza on their Android. Unicode made emojis a shared language across every device and platform.

What This Means for Developers

If you're building software, Unicode isn't optional — it's essential. Any app that handles text (which is basically all of them) needs to account for character encoding when storing data, building interfaces, and processing user input.

The good news? Most modern programming languages handle Unicode automatically. The challenges usually show up when working with older systems, or when data moves between different encoding formats.

The Bottom Line

Unicode is quietly working behind the scenes every time you read a text, browse the web, or fire off an emoji. It's the reason our digital world can handle thousands of languages and symbols without falling apart.

It's not the flashiest technology — but without it, the internet would be a very confusing place.